Use PKS Enterprise on VMware SDDC and Pure Storage

Pivotal Container Service (PKS) provides a deeply integrated Kubernetes (k8s) architecture for the VMware SDDC. It is a joint engineering project from VMware and Pivotal. In my conversations about Kubernetes with Pure Storage customers and potential customers, I am often asked how Pure Storage can help a PKS Enterprise environment. The good news is that there is a very easy path to using k8s with Pure + VMware + PKS.

The Architecture

Using Pure with PKS is actually very straightforward. Since Pure FlashArray is already a leading choice for all VMware environments, supporting PKS is nothing out of the ordinary.

Once you understand the underlying technology that integrates PKS into VMware, you may soon realize that highly reliable, stateless, shared storage is the best choice when deploying PKS.

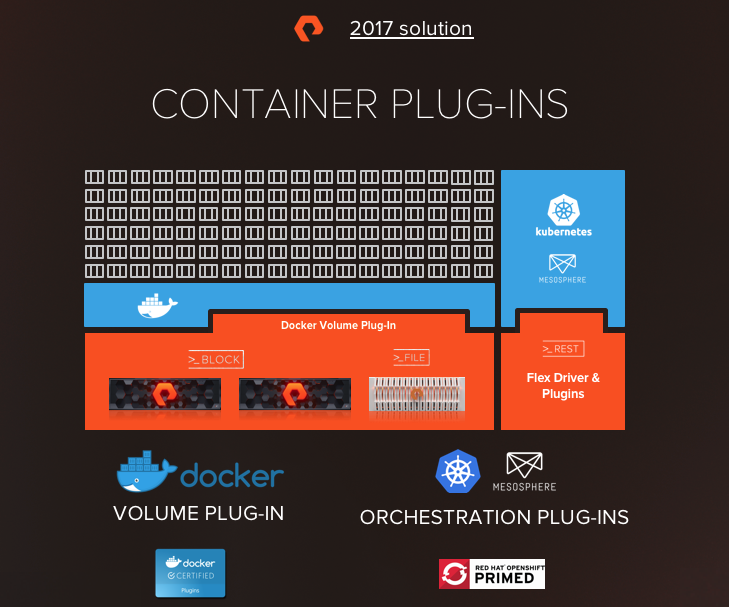

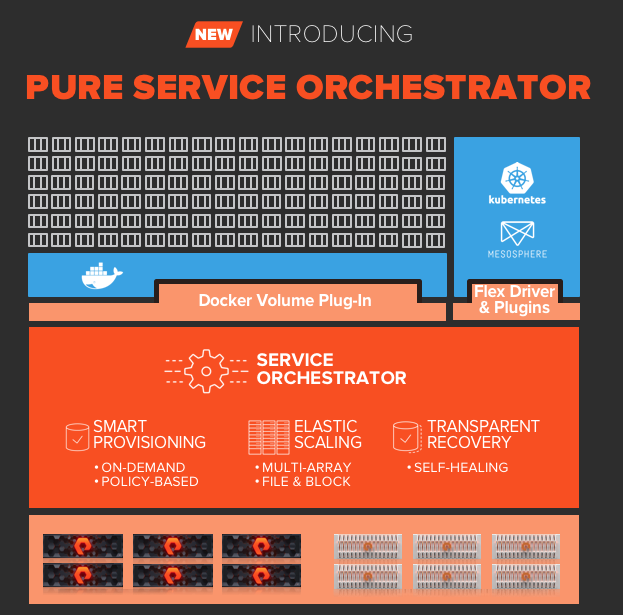

The choice between drivers (shown in the graphic above) to deliver the storage is up to you. The vSphere Cloud Provider automates the creation and management of the virtual disks presented to containers in PKS. It supports the use of vVols, which opens up great possibilities for your PKS environment. Pure Service Orchestrator (PSO) connects directly to Pure Storage FlashArrays, FlashBlades and Cloud Block Stores. It is installed with a single Helm command or with a Kubernetes Operator. It includes Smart Provisioning, which places each volume on the best-suited storage device in your fleet.

The choice of tool will be dictated by your workload. It is not an exclusive choice either; it is easy to use both. After VMworld I hope to publish the details on how to install PSO on PKS. If you have really good GitHub search-fu, you may be able to find the BOSH deployment.
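To illustrate how the two drivers can coexist, here is a minimal sketch that builds one PersistentVolumeClaim per driver. From the application's point of view, the only difference is the storage class the claim names. The class names `vsphere-thin` and `pure-block` and the helper `make_pvc` are assumptions made for this example, not names taken from a real cluster; check `kubectl get storageclass` for the classes that actually exist in yours.

```python
import json


def make_pvc(name, storage_class, size="10Gi"):
    """Build a PersistentVolumeClaim manifest as a plain dict.

    storage_class selects which provisioner serves the claim.
    """
    return {
        "apiVersion": "v1",
        "kind": "PersistentVolumeClaim",
        "metadata": {"name": name},
        "spec": {
            "accessModes": ["ReadWriteOnce"],
            "storageClassName": storage_class,
            "resources": {"requests": {"storage": size}},
        },
    }


# Assumed class backed by the vSphere Cloud Provider (hypothetical name).
vsphere_claim = make_pvc("db-data-vsphere", "vsphere-thin")

# Assumed class backed by Pure Service Orchestrator (hypothetical name).
pso_claim = make_pvc("db-data-pure", "pure-block")

# kubectl accepts JSON as well as YAML, so each manifest can be saved
# to a file and applied with `kubectl apply -f <file>`.
for claim in (vsphere_claim, pso_claim):
    print(json.dumps(claim, indent=2))
```

Both claims can live in the same namespace, which is what makes "use both" practical: each workload simply names the class that suits it.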

Highly Reliable

Pure Storage has measured six nines (99.9999%) of uptime across its customer base. Many storage solutions for container environments require hours of planning and weeks of careful implementation to provide high availability. Do not spend time re-architecting your storage infrastructure for PKS. Spend your time delivering k8s to your customers so they can deliver innovation for your business. Use the Pure Storage devices you already have. You may not even need a whole new dedicated array (don’t tell sales I said that).
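To put six nines in perspective, the short calculation below converts an availability figure into a yearly downtime budget. It is plain arithmetic, assuming a 365-day year; the function name `downtime_seconds_per_year` is made up for this example.

```python
SECONDS_PER_YEAR = 365 * 24 * 60 * 60  # assumes a 365-day year


def downtime_seconds_per_year(nines):
    """Return the yearly downtime budget, in seconds, for N nines of uptime."""
    unavailability = 10 ** -nines  # six nines -> 0.000001
    return SECONDS_PER_YEAR * unavailability


for n in (3, 4, 5, 6):
    print(f"{n} nines: {downtime_seconds_per_year(n):,.1f} seconds per year")
```

At six nines the budget works out to roughly half a minute of downtime per year, compared with close to nine hours at three nines.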

Stateless Arrays for Stateful Data

Migrating data should be eliminated from your daily tasks. FlashArrays are moving further into a future where data always stays in place, and the ability to keep data in place across multiple hardware generations is a proven benefit of Pure. Migrating persistent storage in k8s, even on VMware, is a non-trivial task. Depending on your scale, it could take weeks of planning and careful, flawless execution to accomplish non-disruptively. The underlying hardware should not be a concern when delivering applications. Pure Storage has made this a reality since the FlashArray debut 7 years ago.

Shared Storage

Delivering highly reliable data across multiple PKS and vSphere clusters, so that applications can fail over if the compute in an availability zone becomes unavailable, is key to delivering a cloud experience for your k8s rollout. While the Pure sales teams would gladly help you acquire a FlashArray for every vSphere cluster hosting PKS, this is simply unnecessary in nearly all situations, especially as you start on your Kubernetes journey.

But Why PURE?

Simple: vVols on the FlashArray, combined with the PKS integration with vSphere, enable a mobility of data and a freedom that are unavailable on a legacy datastore. Have a group that rolled their own k8s? FlashArray can clone their persistent data instantly into PKS using vVols. Need to copy data from a bare metal (non-VM) k8s cluster to PKS? Pure vVols make this possible. Have multiple k8s clusters within PKS today that require the same data for test/dev/prod? Pure Storage enables this nearly instantly. Pure Storage FlashArray snapshots and clones move at the speed of an API call. Our SDKs and integrations, from Python to PowerShell to Ansible to Terraform and more, give you an easy way to fit Pure Storage into your Infrastructure as Code tools.
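As a rough sketch of what "the speed of an API call" can look like from Python, the example below snapshots a volume and copies the snapshot to a new volume. It assumes a client object that exposes `create_snapshot(volume)` and `copy_volume(source, dest)`, which is how I understand the `purestorage` REST 1.x Python client to work; verify this against the SDK documentation before relying on it. The `FakeArray` class is an in-memory stand-in so the sketch runs without an array, and the volume names are hypothetical.

```python
class FakeArray:
    """In-memory stand-in for an array client (illustration only)."""

    def __init__(self, volumes):
        self.volumes = set(volumes)
        self.snapshots = set()
        self._counter = 0

    def create_snapshot(self, volume):
        if volume not in self.volumes:
            raise KeyError(f"unknown volume: {volume}")
        self._counter += 1
        name = f"{volume}.{self._counter}"
        self.snapshots.add(name)
        return {"name": name, "source": volume}

    def copy_volume(self, source, dest):
        if source not in self.snapshots and source not in self.volumes:
            raise KeyError(f"unknown source: {source}")
        self.volumes.add(dest)
        return {"name": dest, "source": source}


def clone_volume(array, source_volume, target_volume):
    """Snapshot source_volume, then copy the snapshot to target_volume."""
    snap = array.create_snapshot(source_volume)
    clone = array.copy_volume(snap["name"], target_volume)
    return clone["name"]


# Hypothetical names: a volume from a home-grown cluster cloned for PKS dev.
array = FakeArray(["k8s-diy-pv-001"])
print(clone_volume(array, "k8s-diy-pv-001", "pks-dev-pv-001"))
```

With a real array, `array` would be a connected SDK client instead of `FakeArray`, and the cloned volume would still need to be presented to PKS as a persistent volume, a step this sketch does not cover.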

You could probably spend the next 5 hours reading blogs and papers about all the other benefits of Pure Storage, and they all apply to your PKS on vSphere environment, but I wanted to provide a few examples directly related to operating PKS on Pure.

VMworld 2019 Session

In my session at VMworld in San Francisco, I will demonstrate how Pure Storage is able to instantly migrate persistent volumes from “other” k8s clusters to PKS. Make sure you make it to this session if you are considering PKS.